Digital Contexts: How Communities Self-Archive Online

This text is adapted from a talk given at the Is This Permanence Symposium on May 11, 2018.

As you can see, this talk is called How Communities Self-Archive Online. I promise I’ll get to that in just a minute. But first: Cory Arcangel.

Cory Arcangel is an artist who, among other things, is known for making romhacks; that is, taking a commercial video game and modifying the code. Some examples of romhacks he made are Super Mario Clouds, where he removed everything from the screen on Super Mario Brothers except the sky and the clouds, and I Shot Andy Warhol, a mod of the light gun game Hogan’s Alley where he replaced the characters players are supposed to shoot with people like Flavor Flav and the Pope. Although he developed these on emulators, for the finished pieces he wrote the modified code to EPROMs and replaced the hardwired chips on physical game cartridges with his own.

The thread I want to pick up here is something that Arcangel said about his romhacks at the Seeing Double exhibition in 2004, that he learned how to make these mods from being part of homebrew culture and it’s important to him to give the code he’s written back to the community. I think this is a great goal and that artists in general should more explicitly try to place their work in the context of the cultures that have contributed to it, so I wanted to see what his documentation looked like.

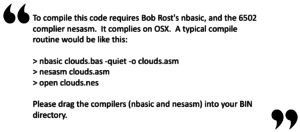

For Super Mario Clouds Arcangel’s site has his source code, a writeup of how he physically replaced the ROM chips on the cartridge, and some example videos showing what the piece does. The code includes a readme file, which we’ll get back to in a moment, but for now I’ll just call out that the published code is for Super Mario Clouds 2k9, not the original version. I’m guessing some of you will find that interesting.

Documentation on I Shot Andy Warhol is also available on Arcangel’s site, particularly in one issue of the zine “The Source,” though I’d call that geared more toward an art audience than a romhacker audience. Part of the reason I say that is that several pages of that issue are just a printout of the hexadecimal code he modified. There might be more painful ways to disseminate code to people who want to use it, but I can’t think of many off the top of my head.

Before going further, I want to be clear that I’m not knocking Arcangel here. He’s gone out of his way to document for the romhacker community in a way that many others wouldn’t. But I want to look at these papers through a lens of how well they meet the goal that he’s implying here, which is perpetuation of homebrew culture. I think there are multiple ways that might be interpreted, though.

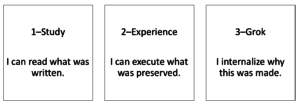

The most basic version allows someone to study the documentation, which should persist until all those fun things like bit rot, missing links, and format drift kick in. Arcangel’s site does that, at least for now. The second stage emulates the experience of the piece, to greater or lesser degrees*; you can download executable code from Arcangel’s site and run it, though you have to dig up an NES emulator. That’s not too hard to do either, at least for now.

The third level is how I’m interpreting Arcangel’s desire to give back to the community. He wants to perpetuate homebrew culture, and in order to do that someone would have to truly grok the piece. Preservation with this goal wouldn’t just emulate the experience of watching the piece, it would emulate the experience of creating the piece–technically, socially, or in any other way you might construe creation–because that’s what the community does.

Things get dicey at that third level. Go back to Arcangel’s readme file here. Nbasic and nesasm are required to compile Super Mario Clouds. Someone naively preserving Arcangel’s papers or work may be able to download a rom from his site and run it on an emulator, but the site’s documentation will not fulfill his goal of perpetuating homebrew culture because anyone who tries to act on it will run into a dead end. While I’m willing to fudge a bit on that second goal and say a generic NES emulator isn’t hard to find, the toolchain he’s talking about is more obscure unless you are already part of the community.

Worse, even though it’s the one that Arcangel specifically mentioned, the romhacker perspective is only one way of grokking the piece. Super Mario Clouds may have a different significance for indie developers or art historians and those perspectives have different externalities. Of course, trying to accommodate every possible perspective isn’t exactly practical. So now, even though I’m totally blaming Arcangel for my taking a simple statement and blowing it up, by pulling on that loose thread I’ve set a goal that isn’t realistic and started unraveling this documentation. The problem is that, though it’s impractical, I think it’s actually a pretty good goal. So lately I’ve been trying to chase down ways to make it happen, and I think that various offshoots and flavors of romhacker communities have some ideas worth looking at.*

Romhackers are already a self-archiving community. Online communities in general, and game modders particularly, are petri dishes for preservation practices. When Arcangel is talking about homebrew culture, part of what he’s talking about is sites like romhacking.net, shown on the left in wonderful 2002-o-vision by the Wayback Machine.* The document on the right is a page from NESDoc, a key reference used by romhackers around the time Arcangel was working. Even though it isn’t on Arcangel’s site, and even though NESDoc never mentions Super Mario Clouds, if the goal is grokking the community then NESDoc has to be part of your archive. The community gets that–even though the 2002 site says it was old and out of date back then, it’s still around today because the community perpetuates it.

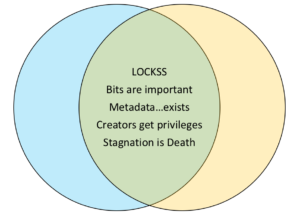

So, how do online communities persist, and how might we adapt what they do to meet the totally unrealistic goal that I set? First, there are some things archives have in common with online communities.

- LOCKSS: Lots of copies keeps stuff safe.

- Bits are important: without bits digital kind of falls apart.

- Metadata exists: …but is less important in communities because of the nature of communities. Metadata is for people who don’t grok data.

- Creators get special regard when talking about the things they’ve created.

- Stagnation is Death: An artifact that is just cataloged and put in a directory for download isn’t going to be maintained at the same level as one that has active users.

Those are a few common points, but for the rest of my time I want to talk about what archives can learn from communities. And really I want to talk about this in terms of principles because the principles are really what are portable between the two.

Principle: Synthetic Preservation

Community sites don’t have writers or videographers to come up with new stuff: they have to constantly crowdsource new content or people wander away and they stop being communities. But crowdsourcing here means something different than in an archive: the archives I’ve seen using crowdsourcing generally aim to collect highly structured data from a wide variety of people. The online community version of crowdsourcing reverses that by collecting a wide variety of data from a structured set of people: the ones who have gained the trust of the community.

For example, when romhackers started to make what are known as Kaizo games–these are games that have been modified to be incredibly difficult to complete–they didn’t put a survey out in the field asking which stock levels in the game were hardest. They used their own experience, they looked at videos of other people playing, they talked to a subgroup of gamers called TASsers who play games by recording frame-by-frame inputs, and overall got a multifaceted view of the document they were working with: the game itself. If the goal of traditional crowdsourcing is finding truth through consensus, the goal of community crowdsourcing is finding truth through synthesis.

Acknowledging synthesis as a fundamental goal of archives can make people uncomfortable, but there are ways to make it work. At the Media Ecology Project we’ve been intentionally removing structure from our film description ontologies and enforcing only the most basic relationships.* We don’t assume that one interpreter of a film will value the same data or significant properties as the next, we just take their data at face value and relate it to the film. The result has been that we’ve created conversations between disparate data, like human annotation of gestures or algorithmic analysis of optical flow. The data is only consistent within its own type–we basically use custom ontologies and RDF glue. The result is an archive that’s inconsistent and, from a cataloging perspective, incomplete; but that’s ok when you value grokking over finding.
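
To make the "custom ontologies and RDF glue" idea a little more concrete, here is a minimal sketch in Python. It is an illustration under assumptions, not the Media Ecology Project's actual schema or code: it uses plain tuples as subject-predicate-object triples instead of a real RDF library, and every identifier in it (the `film:`, `anno:`, `gesture:`, and `flow:` names, the `ex:describes` predicate, and all sample values) is hypothetical. The only relationship it enforces is that each record points at a film; everything else is left to each record's own vocabulary.

```python
from collections import defaultdict

# Each statement is a (subject, predicate, object) triple, as in RDF.
# All identifiers and values below are invented for illustration.
DESCRIBES = "ex:describes"  # the one relationship every record must have

triples = [
    # A human annotation that uses a gesture vocabulary.
    ("anno:gesture-1", DESCRIBES, "film:example-film"),
    ("anno:gesture-1", "gesture:type", "wave"),
    ("anno:gesture-1", "gesture:startSeconds", 12.0),
    # An algorithmic analysis that uses a completely different vocabulary.
    ("anno:flow-1", DESCRIBES, "film:example-film"),
    ("anno:flow-1", "flow:meanMagnitude", 0.42),
    ("anno:flow-1", "flow:startSeconds", 11.5),
]


def records_about(film, triples):
    """Group every record that describes `film`, whatever its vocabulary."""
    subjects = {s for s, p, o in triples if p == DESCRIBES and o == film}
    records = defaultdict(dict)
    for s, p, o in triples:
        if s in subjects and p != DESCRIBES:
            records[s][p] = o
    return dict(records)


about_film = records_about("film:example-film", triples)
for name, fields in sorted(about_film.items()):
    print(name, fields)
```

Nothing in the sketch tries to reconcile the gesture record with the optical flow record. They sit side by side, connected only through the film, and any comparison between them is left to whoever is reading the data.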

Principle: Living Documents

Everything in an online community is a living document of one sort or another. They discover their system needs more data, or resources, or links when somebody tries to use it in new ways. Even the community medium, which in the time I’m talking about was web forum software,* is built around a constantly updating page.

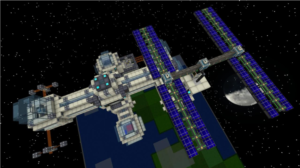

Minecraft modding communities, for instance, have adapted not just to changes in the game itself but also to changing expectations for what Minecraft should be. If a modder wants to build a space station, great – but first they need to build space, and once one person builds space it’s there for everyone to use. The document has changed; but again, that’s ok. They can archive the old bits while the community migrates to new norms. A model of digital preservation that accepts moving targets is more likely to end up with preserved materials that actually get used.

One side note since I mentioned forums: I think everyone in this room is pretty familiar with the predicted digital dark age and one reason we’re here is to mitigate it as best we can. In the early days of the web, community conversations were built on text that will be relatively easy to recover. Now, though, the daily communications are moving to ephemeral voice platforms like Discord while the artifacts these communities are creating are getting more complex and fragile. That trend points less toward a coming dark age and more toward a coming posthistoric age.

Principle: Embrace Imbalance

Beyond platforms, though, communities are themselves in a constant state of flux. Individual members come and go from the community all the time and even long-term members have waxing and waning interest. Where institutions see flux as a problem–think of the last time a long-term staff member left their job–communities recognize it as inevitable and produce something useful out of it.

In fact, one reason why online communities become self-archiving is to deal with flux. Say somebody new shows up at your forum and wants to join the conversation. Over the years the regulars have written community documentation, like this wiki that is attached to a community called Tetrisconcept, and the newbie just gets told to go read it. Even though there is plenty of reference material that’s useful to longstanding members, the wiki is written for outsiders because it’s about bringing new people up to speed. Community is like energy – it does work when flowing across an imbalance.

In archives we talk a lot about data silos, but I think perspective silos are just as destructive. When we create metadata or analysis exclusively for highly specialized audiences we’re not addressing the imbalance between expert and neophyte and we’re missing an opportunity to regenerate our own communities of use. Just like a community that becomes insular, preservation without outreach is unsustainable.

Principle: Community First

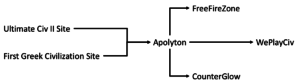

As the saying goes, home isn’t a place, it’s the people around you. That rule applies to online communities as well; for instance, a community that has been modding the Sid Meier’s Civilization series of games has migrated en masse between different forums several times over the course of the last twenty years.* It’s taken the mods it’s created with it each time – you can still download mods uploaded to the long-defunct Ultimate Civ II Site today. Even though the name and location changed, the community itself continued.

What can that mean for archives, though? This one is really hard because it goes to the core of institutions that have practical problems like paying employees; I don’t think any of us want that to stop. But the principle is that the digital documents we’re preserving are a public good. We need to be willing to let the things we preserve leave our institutional control when that is what’s needed to sustain them. Whether that means opening up access, or participation in disciplinary networks, or feeding aggregators, or anything else that can be accomplished without completely undermining our ability to keep preserving new things, is up to each institution; but whatever we can do to make preserved data portable between institutions will help it survive.

Principle: Shared Experience

I want to end on this principle because I think it’s actually the most important one I’ll talk about today. Communities are built on shared experience. Ok, that’s not exactly a revelation, but it’s worth looking at what that means to these modding communities in part because they are premised on the continuous deconstruction and rebuilding of their own history. Romhackers know and care about the games they’re editing as historical documents but they’re also constantly changing those same documents in ways that add new meaning and context to them. In some cases it’s impossible to grok a mod made today without knowing how the understanding of the original game has changed over decades of mods–it’s the historical equivalent of calculating integrals in calculus.

The response in some parts of the community has been to explicitly document the progression of that understanding. There’s a really interesting online community that I’d probably call romhacker-adjacent called speedrunners.* These people play games with the goal of getting to the end as fast as possible by any means necessary – exploiting a bug is just as legal as anything in the game manual. People have started documenting how speed records drop with the discovery of new bugs and techniques by producing videos historicizing those moments.

I think it’s important to point out that the relationship between speedrunners and documentarians like SummoningSalt here is different than the relationships between artists and archivists or between archives and scholars. Although there are occasionally tensions over perceived content farming, speedrunners and documentarians are developing a symbiotic relationship. Creating historicized documentation of speedrunning is starting to reflexively change speedrunning itself because the documentation isn’t coming from outside the community, it’s coming from inside it.

I mentioned my work with the Media Ecology Project earlier; one thing we talk about a lot is creating a virtuous cycle where scholarship improves the understanding and discoverability of studied material in its source archive. In turn, broader metadata in archives and greater visibility lead to more connected research. The speedrunning community has begun to embody this principle because they have opened up their definition of what it means to be part of the speedrunning community. Individuals may have different roles, but they are mostly thought of as part of the same “us”. I’m not sure the same is true of archivists, artists, preservationists, and scholars; the self-identification with a particular group, inherent power imbalances, and the forces of knowledge and reputation economies can all get in the way.

From a preservation perspective, that means we need to build systems that recognize we work within those knowledge and reputation economies; not as an afterthought, but baked right into the epistemology of the information systems, processes, and workflows we create. Though I’m sometimes critical of identity around digital humanities as a discipline, I do think that it’s starting to become useful as a way to overwrite identifications that get in the way of that virtuous cycle. The kind of interdisciplinary investigator DH suggests, though, means that things like highly granular attribution and provenance are as important for perpetuating our ability to do preservation work as they are for establishing ground truth about artifacts.

I’ll end this discussion with an open question: right now our ontologies are designed to support artifacts in archives. How can we design ontologies that support people working in archives, and what would adopting that perspective let us do to promote successful digital preservation?

Notes

- The software component, at least. Museum installations have specific layouts and include physical components.

- For these examples I’m focusing more on the ‘community’ part than the ‘romhacker’ part; the examples I use are more broadly drawn from communities who do interesting things with video games.

- This is a generic example from the community; a true preservation project would talk to Arcangel to determine if this page was something he actually looked at.

- This is the linked data paradigm; limit individual metadata records to specific concerns and connect them together to get a big-picture view of an artifact.

- Or IRC, which is even more dynamic.

- It’s worth noting that this is just one fragment of an even larger set of sites that often share membership.

- Most of the communities discussed here have some overlap, but speedrunners are primarily focused on playing the games or modding them into tools that help improve gameplay so they’re a bit of an outlier. The two communities complement one another.